When AI Learns What We Never Taught It: Emergent Intelligence Explained

What happens when a system starts solving problems it was never trained to solve?

Not better. Not faster.

But differently.

This is the uncomfortable frontier of modern artificial intelligence. As models scale, they begin to exhibit emergent abilities: capabilities that appear suddenly rather than gradually. They are not explicitly programmed, not directly trained, and often not even expected.

Translation, reasoning, coding, analogical thinking. Entire cognitive behaviors seem to switch on once a system crosses a certain threshold.

And the most unsettling part?

We don’t fully understand why.

The Pattern That Shouldn’t Exist

Traditional machine learning follows a predictable logic. Improve the data, increase model size, optimize training. Performance improves smoothly. Incrementally.

But large language models broke that expectation.

Researchers observed something strange. Below a certain scale, models perform no better than random guessing on specific tasks. Then, almost abruptly, performance jumps.

Not by a few percent.

From failure to competence.

This phenomenon was systematically documented in a 2022 study by Wei et al., which called an ability emergent if it is absent in smaller models but present in larger ones. On tasks such as multi-digit arithmetic, transliteration into the International Phonetic Alphabet, and word unscrambling, performance stayed near chance at smaller scales and then rose sharply rather than gradually.

This is not just improvement.

It looks like a phase transition.

What Emergence Really Means

In complex systems, emergence describes behavior that arises from interactions between components, rather than from explicit design.

A frequently cited example, though one that remains debated, is consciousness arising from neurons. No single neuron contains awareness, yet together they appear to generate it.

In AI, the analogy is striking.

Each parameter in a neural network is simple. A single number. The strength of one weighted connection.

But at scale, billions or trillions of these parameters interact. Patterns reinforce each other. Representations become layered. Abstractions form.

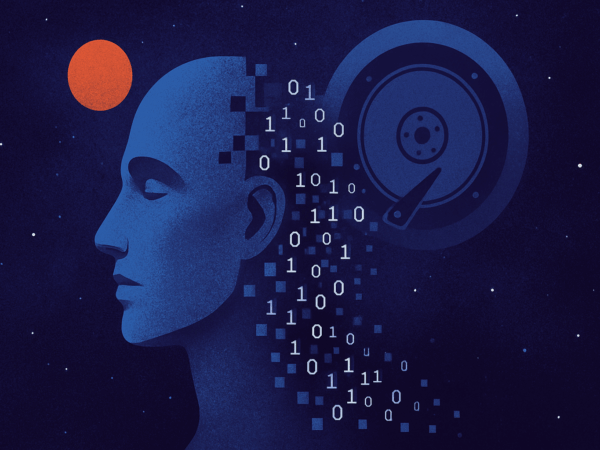
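The idea that simple parts can jointly do what none can do alone has a classic small-scale illustration, added here rather than taken from the article's sources: the XOR function. A single threshold unit (a weighted sum plus a bias, then a cutoff) cannot compute XOR, because no straight line separates its true cases from its false ones, but three such units wired together can. The sketch below assumes hand-picked weights for clarity; real networks learn theirs during training, and `unit`, `xor_network`, and `single_unit_solves_xor` are illustrative names.

```python
import itertools


def step(x: float) -> int:
    """Threshold activation: fires (1) when the weighted input is positive."""
    return 1 if x > 0 else 0


def unit(inputs, weights, bias) -> int:
    """One artificial neuron: weighted sum of inputs plus a bias, then a threshold."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def xor_network(a: int, b: int) -> int:
    """Three units with hand-picked (hypothetical) weights that together compute XOR."""
    h_or = unit((a, b), (1.0, 1.0), -0.5)   # fires if at least one input is on (OR)
    h_and = unit((a, b), (1.0, 1.0), -1.5)  # fires only if both inputs are on (AND)
    return unit((h_or, h_and), (1.0, -1.0), -0.5)  # "OR but not AND" is XOR


def single_unit_solves_xor(grid) -> bool:
    """Brute-force check: does any single unit with weights/bias drawn from grid compute XOR?"""
    cases = [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)]
    for w1, w2, bias in itertools.product(grid, repeat=3):
        if all(unit((a, b), (w1, w2), bias) == y for a, b, y in cases):
            return True
    return False


if __name__ == "__main__":
    for a, b in itertools.product((0, 1), repeat=2):
        print(a, b, xor_network(a, b))
    grid = [x / 2 for x in range(-6, 7)]  # weights and biases from -3 to 3 in steps of 0.5
    print("single unit finds XOR:", single_unit_solves_xor(grid))
```

The grid search is only a sanity check, not a proof; the proof is the geometric fact that XOR is not linearly separable, so no choice of weights for one unit will ever work.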

At some point, the system stops behaving like a statistical tool and starts behaving like something else.

Something closer to a reasoning engine.

The Scale Hypothesis

One of the leading explanations is deceptively simple.

Scale changes everything.

As models grow larger, they do not just store more information. They develop new internal structures. Representations become more flexible. Latent spaces become richer.

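Scaling laws, identified by Kaplan et al. (2020) and later refined by Hoffmann et al. (2022), capture the aggregate trend: test loss falls as a smooth power law in model size, dataset size, and training compute, and Hoffmann et al. showed how to split a fixed compute budget between parameters and training tokens. Overall performance improves predictably.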
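To make "smooth" concrete, here is a minimal sketch of the parameter-count law from Kaplan et al., L(N) = (N_c / N)^alpha_N, using their approximate fitted constants (alpha_N about 0.076, N_c about 8.8e13 non-embedding parameters). The second function does not reproduce Hoffmann et al.'s fitted equations; it assumes two common rules of thumb instead: training compute C is roughly 6 * N * D, and a compute-optimal model sees roughly 20 training tokens per parameter. Function names are illustrative.

```python
import math

# Approximate constants reported by Kaplan et al. (2020) for L(N) = (N_c / N) ** alpha_N,
# where N counts non-embedding parameters and L is test loss in nats per token.
# Treat them as illustrative, not exact.
ALPHA_N = 0.076
N_C = 8.8e13


def kaplan_loss(n_params: float) -> float:
    """Predicted loss for a model with n_params parameters, assuming data is not the bottleneck."""
    return (N_C / n_params) ** ALPHA_N


def compute_optimal_split(compute_flops: float, tokens_per_param: float = 20.0):
    """Rough Chinchilla-style allocation using the rules of thumb C ~ 6*N*D and D ~ 20*N."""
    n_params = math.sqrt(compute_flops / (6.0 * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens


if __name__ == "__main__":
    # Every 10x increase in size multiplies the predicted loss by the same factor.
    for n in (1e8, 1e9, 1e10, 1e11):
        print(f"N = {n:.0e}  predicted loss = {kaplan_loss(n):.3f}")
    n, d = compute_optimal_split(5.76e23)
    print(f"~{n:.2e} parameters trained on ~{d:.2e} tokens")
```

With 5.76e23 FLOPs, roughly the budget reported for the Chinchilla model, the rule of thumb lands near 70 billion parameters and 1.4 trillion tokens, close to Chinchilla's actual configuration. Nothing in either curve jumps.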

But those smooth curves describe average loss. Performance on the specific tasks that show emergence does not follow them.

It appears discontinuous.

That suggests something deeper may be happening.

Hidden Structure Inside Neural Networks

Modern AI systems are not black boxes in the traditional sense. They are better described as opaque systems with structure we struggle to interpret.

Recent work in mechanistic interpretability has revealed surprising internal organization:

- Neurons that respond to specific concepts

- Circuits that implement logical operations

- Layers that progressively move from surface features, such as syntax, toward meaning

In transformer models, attention heads can specialize. Some track grammar. Others track relationships between entities. Some even appear to implement algorithm-like behavior.

This suggests that large models are doing more than memorizing surface patterns.

They appear to build internal representations of the world.

And once those representations are rich enough, new abilities may emerge naturally.
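One well-studied example of algorithm-like behavior in attention heads is what interpretability researchers at Anthropic call an induction head: a circuit that, on seeing a token that appeared earlier, predicts the token that followed it last time ([A][B] ... [A] → [B]). The sketch below is a plain Python rule that mimics that input-output behavior; it is not a neural network, and `induction_predict` is a hypothetical name.

```python
from typing import Optional, Sequence


def induction_predict(tokens: Sequence[str]) -> Optional[str]:
    """Hand-written version of the pattern [A][B] ... [A] -> [B].

    Find the most recent earlier occurrence of the current (last) token and
    predict the token that followed it. Returns None if there is no earlier match.
    """
    if not tokens:
        return None
    current = tokens[-1]
    # Scan earlier positions from right to left, excluding the current token itself.
    for i in range(len(tokens) - 2, -1, -1):
        if tokens[i] == current:
            return tokens[i + 1]
    return None


if __name__ == "__main__":
    text = "Harry Potter went to the shop and Harry".split()
    print(induction_predict(text))
```

In real transformers, this behavior has been traced to attention heads in different layers working together, which is part of why it is hard to see without interpretability tools.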

Few-Shot Learning and the Illusion of Understanding

One of the most striking emergent behaviors is few-shot learning.

Give a model a few examples of a task, and it generalizes instantly. No retraining. No parameter updates. Just inference.

This was not explicitly programmed.

It emerged.
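Mechanically, few-shot learning is just text: the solved examples sit in the prompt, and the model continues the pattern. The sketch below assembles such a prompt in the style of the English-to-French illustration in Brown et al. (2020). The function name and exact layout are assumptions, and sending the prompt to a model is left out because that depends on the API in use.

```python
from typing import Iterable, Tuple


def build_few_shot_prompt(task: str, examples: Iterable[Tuple[str, str]], query: str) -> str:
    """Assemble a few-shot prompt: a task description, solved examples, then an open query.

    No weights are updated; the "training data" lives entirely in the prompt, and
    the model is expected to continue the text after the final "=>".
    """
    lines = [task]
    for source, target in examples:
        lines.append(f"{source} => {target}")
    lines.append(f"{query} =>")
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_few_shot_prompt(
        "Translate English to French:",
        [("sea otter", "loutre de mer"), ("peppermint", "menthe poivrée")],
        "cheese",
    )
    print(prompt)
```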

In smaller models, few-shot prompting helps little. In larger ones, it becomes far more effective (Brown et al., 2020).

The implication is profound.

The model appears to do more than match patterns. It seems to infer rules on the fly.

This blurs the line between learning and inference.

When AI Starts to Reason

Another emergent ability is chain-of-thought reasoning.

When prompted to think step by step, large models dramatically improve performance on complex problems. They break tasks into intermediate steps and simulate reasoning processes.

This behavior was not hardcoded.

It was discovered.
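Chain-of-thought prompting, as introduced in a separate 2022 paper by Wei et al., works by including a worked example whose answer spells out its intermediate steps; a zero-shot variant simply adds an instruction such as "Let's think step by step." The sketch below builds an exemplar-based prompt using the tennis-ball problem from that paper and extracts the final answer from a completion. It assumes the model copies the exemplar's "The answer is N." ending; the function names are hypothetical.

```python
import re
from typing import Optional

# One worked exemplar: the answer is preceded by its reasoning steps.
COT_EXEMPLAR = (
    "Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?\n"
    "A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
    "5 + 6 = 11. The answer is 11.\n"
)


def build_cot_prompt(question: str) -> str:
    """Prepend the worked exemplar so the model imitates a step-by-step answer."""
    return f"{COT_EXEMPLAR}\nQ: {question}\nA:"


def extract_answer(completion: str) -> Optional[str]:
    """Return the number in the last 'The answer is N' phrase, or None if absent."""
    matches = re.findall(r"The answer is\s+(-?\d+(?:\.\d+)?)", completion)
    return matches[-1] if matches else None


if __name__ == "__main__":
    print(build_cot_prompt("A baker has 4 trays of 6 muffins and sells 5. How many are left?"))
```

Grading only the extracted number, rather than the reasoning text, is a common design choice: it keeps evaluation simple, at the cost of ignoring whether the intermediate steps were actually correct.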

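Even more interesting, the ability appears only beyond a certain model size. In the original chain-of-thought experiments, the gains showed up only in models of roughly 100 billion parameters. Smaller models, even when prompted, tended to produce fluent but illogical chains and sometimes scored worse than with standard prompting.

Larger ones could carry the steps through.

This suggests that step-by-step reasoning, at least in this prompted form, is an emergent property of scale.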

The Phase Transition Analogy

Physicists would recognize this pattern immediately.

Water does not gradually become ice. It reaches a threshold, then freezes.

Similarly, neural networks may undergo computational phase transitions.

Below a threshold, the system lacks the capacity to represent certain structures. Above it, entirely new behaviors become possible.

This reframes AI development.

We are not just optimizing performance. We are crossing thresholds.

The Unpredictability Problem

Emergence introduces a serious challenge.

If capabilities appear suddenly, they are difficult to predict.

This has implications for safety, alignment, and control.

A system that behaves predictably at one scale may behave differently at another. New abilities may appear without warning.

This is why researchers are increasingly focused on AI alignment and interpretability.

Not just to improve performance. But to understand what the system is actually doing.

Are We Discovering or Creating Intelligence?

There is a deeper philosophical question beneath all of this.

Are we building intelligence, or uncovering it?

If emergent abilities arise inevitably from scale and structure, then intelligence may not be something we design in detail.

It may be something that appears when systems become complex enough.

This parallels ideas in biology and physics. Life emerges from chemistry. Consciousness, many argue, emerges from neural activity.

Perhaps intelligence emerges from computation.

The Limits of Control

One of the most uncomfortable implications is this:

We may not fully control what we are creating.

We can design architectures. We can curate data. We can guide training.

But we cannot specify every behavior.

Emergent systems have degrees of freedom we never explicitly chose.

They surprise us.

And that means our relationship with AI is shifting. From engineering to exploration.

The Scientific Frontier

Research into emergent abilities is still in its early stages.

Key open questions include:

- What exact mechanisms drive emergence?

- Can we predict when new abilities will appear?

- Are there limits to what can emerge?

- How do we ensure safety in systems we don’t fully understand?

Some researchers argue that apparent emergence may be an artifact of how performance is measured, for example with all-or-nothing metrics that give no credit for a nearly correct answer. Others believe it reflects genuine discontinuities in capability.

Either way, the abrupt jumps in benchmark scores are real observations. The open question is what they reveal about the models themselves.
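One version of the measurement-artifact argument (made, for example, by Schaeffer et al., 2023) comes down to simple arithmetic. If a model gets each token of an answer right with probability p, and a benchmark only credits a fully correct answer of k tokens, the score behaves like p^k. The sketch below assumes tokens are independent and uses hypothetical per-token accuracies: a smooth rise in p produces an exact-match score that stays near zero and then climbs steeply.

```python
def exact_match_rate(per_token_acc: float, answer_len: int) -> float:
    """Chance of getting every token of an answer right, under the simplifying
    assumption that each token is correct independently with the same probability."""
    return per_token_acc ** answer_len


if __name__ == "__main__":
    # Hypothetical per-token accuracies that rise smoothly as models grow.
    for p in (0.50, 0.60, 0.70, 0.80, 0.90, 0.95, 0.99):
        print(f"per-token {p:.2f}  ->  exact match on a 10-token answer {exact_match_rate(p, 10):.3f}")
```

Whether real emergence reduces to this effect is exactly what remains disputed. The sketch only shows that a sharp curve on a benchmark does not, by itself, prove a sharp change inside the model.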

Beyond Language Models

Emergence is not limited to text.

Similar effects are observed in:

- Reinforcement learning agents developing unexpected strategies

- Vision models discovering abstract features

- Multi-agent systems exhibiting coordination without explicit rules

This suggests that emergence may be a general property of complex learning systems.

Not a quirk of language.

What This Means for the Future

If emergent abilities continue to scale, we may see:

- More generalized AI systems

- Improved reasoning and planning

- Unexpected capabilities across domains

But also:

- Harder-to-predict behavior

- New alignment challenges

- Increased need for interpretability

The direction, at least, seems clear.

AI is becoming less like a tool and more like a system we study.

TL;DR

- Large AI systems exhibit emergent abilities not explicitly programmed

- These abilities appear suddenly after crossing scale thresholds

- Examples include reasoning, translation, and few-shot learning

- Emergence suggests deeper internal structure and representation

- It introduces unpredictability and new challenges for AI safety

- Intelligence may be a natural outcome of complexity

References

- Wei, J. et al. (2022). Emergent Abilities of Large Language Models.

- Kaplan, J. et al. (2020). Scaling Laws for Neural Language Models.

- Hoffmann, J. et al. (2022). Training Compute-Optimal Large Language Models.

- Brown, T. et al. (2020). Language Models are Few-Shot Learners.

- Nye, M. et al. (2021). Show Your Work: Scratchpads for Intermediate Computation with Language Models.

- Olah, C. et al. (2020). Zoom In: An Introduction to Circuits.

- Bubeck, S. et al. (2023). Sparks of Artificial General Intelligence: Early Experiments with GPT-4.